Don't delegate the cognitive struggle

Summary

Knowledge work isn’t only about producing outputs. An LLM-powered, output-centric way of working seduces us into mistaking speed for productivity. By delegating the cognitive struggle, we dull our intellect, atrophy our hard-earned skills, and settle for mediocre outcomes. At the team and organisational level, this mindset intensifies work as we kick the AI-workslop can downstream.

When I joined Thoughtworks 19 years ago, many colleagues encouraged me to be “metacognitive”; i.e., to be thoughtful about how I think. Over the years, I’ve taken that advice to heart, and as we sit perched at the apex of the AI bubble, I think about that word even more.

The genius of LLMs is that, in many cases, they seem impressive if you don’t have the expertise and are a pain in the wrong place if you’re skilful. A recent MIT study backs up this theory, though it also claims that AI performance is “high and improving rapidly across a wide range of tasks.” As of late 2025, LLMs could complete human-level tasks at only a 65% success rate, and the study predicts that by 2029, they’ll be able to complete text-related tasks at a success rate of 80–95% at “minimally sufficient quality.”

OK. Breathe. Take that information in. Breathe out. Yes, they said, “minimally sufficient quality”. That’s a C-minus at school, the kind of work that gets you a “meets expectations with support” appraisal at work. Oh, and the prediction only applies to “text-related” tasks. Surely both AI boosters and doomers should cool their jets a bit, shouldn’t they? Ah well! A man can hope.

And yes, 2029. That’s dog years in tech. Who knows if OpenAI makes it until then? Who knows what happens to Anthropic by then? We seem to think of the generative AI industry as a perpetual motion machine that’ll go on forever. But infinite growth on finite capital and even more finite natural resources is mathematically impossible, though markets pretend otherwise.

What I am counting on is that high-end knowledge work isn’t going anywhere. Judgement, skill, taste, and metacognition will matter more than ever. And that’s why I’m concerned about how much of our work we’re delegating to these word-guessing machines.

The truth about knowledge work

The hard thing about hard things is never the hard thing. To the uninitiated, it may seem that the hardest part of building software is writing code. If that were the case, we’d never have had pair programming. Similarly, the hardest thing about product management isn’t creating roadmaps and product requirements documents. The hardest thing about design isn’t creating mockups. Slides aren’t the hardest part of crafting a presentation. The final document isn’t the hardest thing about writing. School isn’t about submitting homework. Reading isn’t the same as gleaning a book summary.

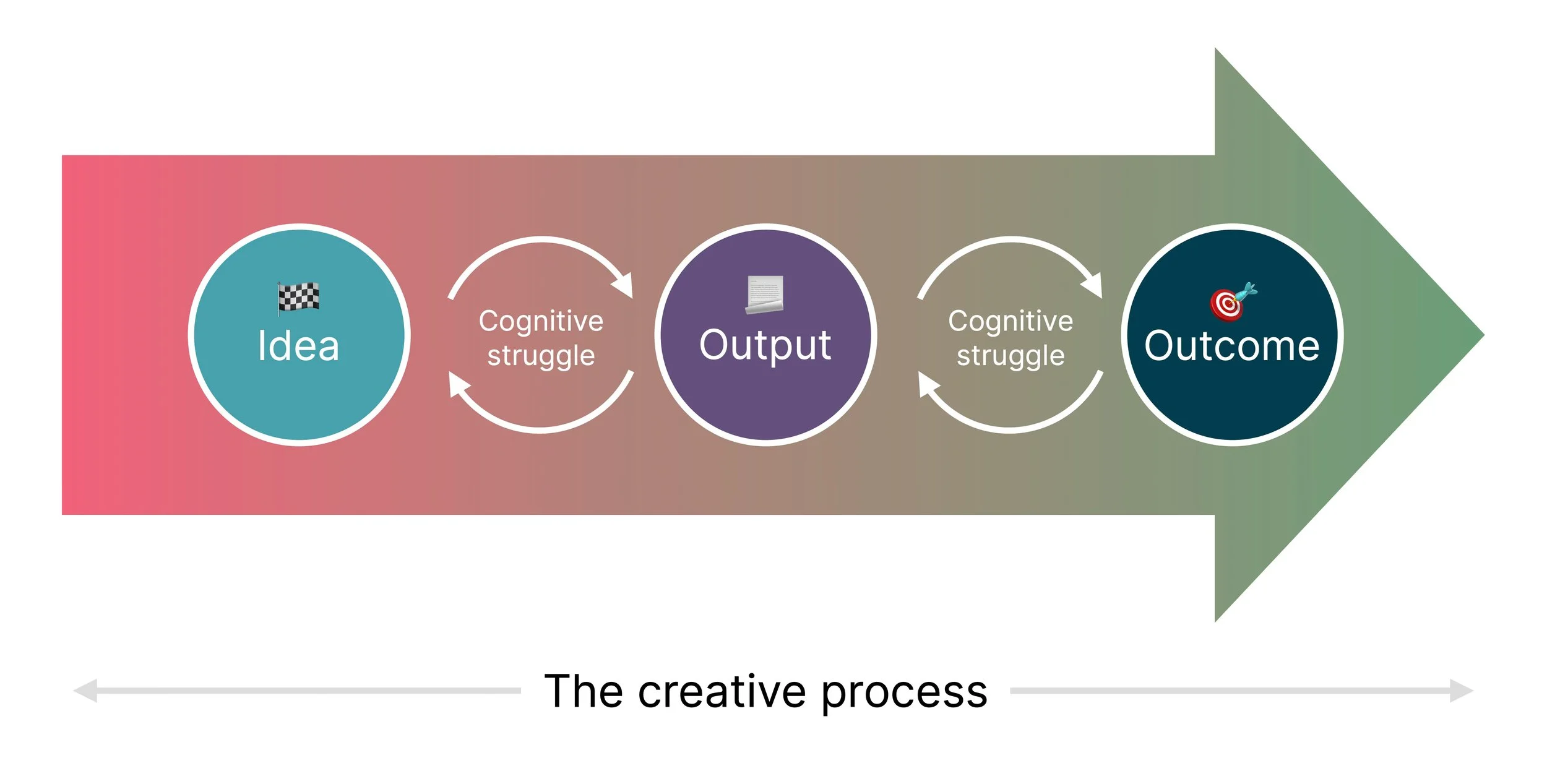

You can decompose each of these hard things into four constituent parts.

The starting point or idea.

The process of problem solving.

The output or artefact from the process.

The outcome that the process and output achieve.

The cognitive struggle is an integral part of the creative process

Let’s take writing as an example. Whether good or bad, every piece of human-crafted writing involves a cognitive struggle. Most people my age will recognise the familiar trope of a writer battling writer’s block, shredding many a draft and turning many a page into a paper ball before achieving a flow state. Thinking through the structure of an essay requires wrestling with our thoughts as we produce our words. We type. We delete. We rephrase. We structure. We restructure. We critique our writing. We sleep on our first draft and wake up the next day with fresh ideas. The cognitive struggle is as much in service of the output as it is an exercise for our brains. It’s what keeps us sharp.

Skilful writers are in command of this writing process and can craft outputs that achieve desired outcomes with their readers. The same logic applies to skilful engineers, designers, presenters, and storytellers. The process is integral to the output and the outcome.

Outputs aren’t the end-all

The biggest fallacy about knowledge work, then, is treating the output as the end-all. Summon a stochastic parrot. Ask it to generate some mockups. Design? Done. Ask an LLM wrapper to generate slides. Presentation? Done. Run your homework assignment through a chatbot. School? Done. Upload an EPUB to NotebookLM and generate a summary. Reading? Done.

No. Not done.

Design is about solving user problems. Presentations are about telling a compelling story. School is about learning. The point of school surely isn’t to feed a homework goblin. And reading? Reading is joyful, educational, and mentally challenging all at once. Using LLMs to escape the cognitive struggle erodes our cognitive capacity: much like muscles that atrophy through disuse, our minds weaken as we delegate “thinking” to LLMs.

The perils of output-centricity

The Wall Street Journal and Harvard Business Review have both reported that LLMs don’t reduce work – they intensify it. Every executive is under pressure to “adopt AI,” even if their people don’t see a need for it. The most performative way to adopt AI is to create more outputs. In a time when we mistake activity for productivity, it’s easy to laud each other for extracting passable artefacts from LLMs. If your company provides access to platforms like Glean, UX Pilot, Replit, OpenClaw, or even subscriptions to Perplexity or Claude, producing outputs is the easiest part. Dealing with them is the hard bit.

As each person in a workflow generates new outputs and kicks the AI-workslop can to someone downstream, they add to the team’s cognitive load. It’s not just about reading and acting on an AI-generated deep research report or reviewing AI-generated code. It’s about dealing with higher entropy across the whole system: change management, release management, decision-making, communication, and coordination all become more intense and more brittle.

It’s no wonder, then, that the WSJ piece found that the use of email, messaging, and chat apps has doubled, while the time for focused work to solve complex problems has fallen by 9%. Cal Newport characterises this shift as a “worst-case scenario.” Individual tasks happen faster – you can keep reaching for that generate button – but when did speed and productivity become the same thing?

Embracing the struggle

I hope that someday soon, the LLM bubble bursts. Coding is probably the only area where LLMs have found real product–market fit, where the labs can plausibly find users willing to pay the true cost of these services. Even there, fine-tuned small language models (SLMs) could be a viable alternative, so I’m not convinced much of this technology will be around in a few years.

But let’s assume that this circus continues forever. Maybe Claude Mythos finally becomes the mayor of San Francisco. In that scenario, too, if you see your job as merely producing outputs, you’ll be less valuable than someone who owns the process, outputs, and, most importantly, the outcome.

Since I’m not in the business of predictions anymore, let me share how I plan to protect my differentiation while the world loses its marbles over every Altman and Amodei shenanigan.

If I’m good at something, I’m not delegating that work to LLMs. I’ve honed my craft over many years, to the point where my work is several times better than the basic outputs an LLM produces. And I trust myself to drive to outcomes far more than I trust a stochastic parrot.

To make time for the things I’m good at, I’ll use LLMs in the most cynical way possible. I’ll delegate all the boring, box-ticking, Dilbertesque work to generative AI tools. That way, I’ll also stay conversant with these tools for as long as it’s necessary.

I’m going to protect my colleagues from AI-induced intensity. It’s too easy to send someone an AI-generated artefact without considering its downstream impact. So, if I’m unwilling to own an output, I won’t foist it on someone else.

To be clear, sometimes speed is necessary to achieve an outcome. If LLMs offer speed in those circumstances, I will adopt them. Output speed, however, isn’t always the bottleneck to a meaningful outcome, nor is it a reliable proxy for productivity.

Most importantly, though, I’ll embrace the cognitive struggle of facing a blank page, screen, or canvas and the creative frustration I feel in these circumstances. I don’t have to choose pseudo-productivity. I’ll hone my metacognition and creative process instead.